Cost Analysis - An x86 Massacre - Amazon's Arm-based Graviton2 Against AMD and Intel: Comparing Cloud Compute

Benchmark Scores for the Amazon Fire TV Stick 4K Max — Compared to Google Chromecast, Onn 4K, Firestick 4K, and more | AFTVnews

Price-Performance Analysis of Amazon EC2 GPU Instance Types using NVIDIA's GPU optimized seismic code | AWS HPC Blog

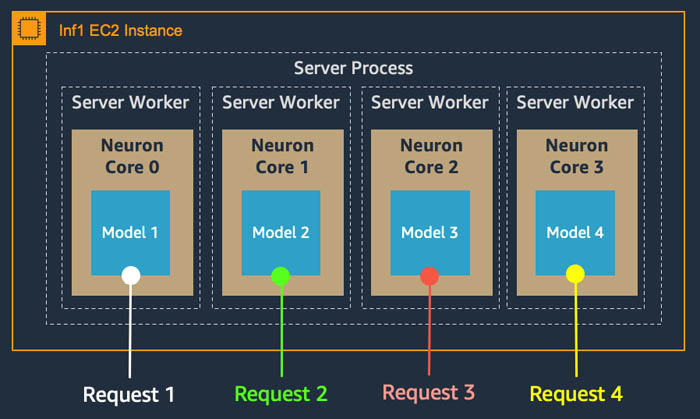

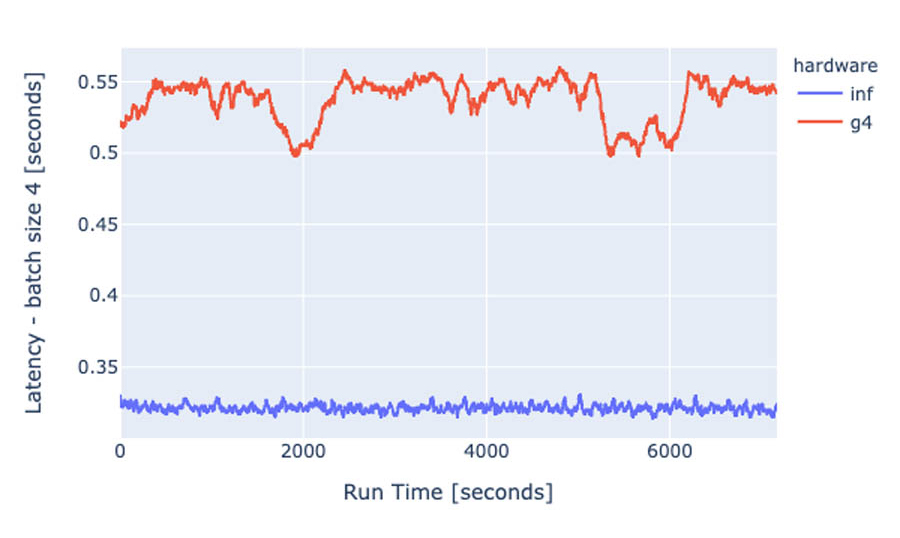

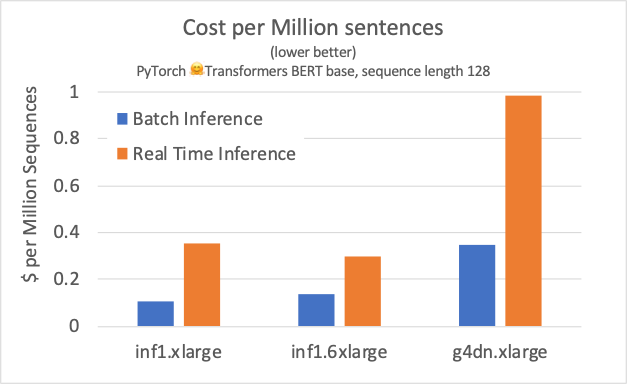

Achieve 12x higher throughput and lowest latency for PyTorch Natural Language Processing applications out-of-the-box on AWS Inferentia | AWS Machine Learning Blog

Achieving 1.85x higher performance for deep learning based object detection with an AWS Neuron compiled YOLOv4 model on AWS Inferentia | AWS Machine Learning Blog

![Best GPU Benchmarking Software [June 2023] - GPU Mag](https://www.gpumag.com/wp-content/uploads/2020/05/msiafterburner-screenshot-600x413.jpg)